The path to agentic AI isn't a leap—it's a climb built on data quality foundations. Proactive monitoring transforms operations today while enabling autonomous capabilities tomorrow.

Author: Alex Champagne

In our previous article on Agentic AI, we explored how autonomous systems are transforming enterprise operations by making decisions and taking actions with minimal human intervention. However, there’s a critical prerequisite that often gets overlooked in the rush toward AI adoption: data quality.

Without reliable, complete, and trustworthy data, even the most sophisticated agentic systems will fail, or worse, automate poor decisions on a large scale. This article examines how robust data quality monitoring serves as both a business imperative today and an essential foundation for tomorrow’s agentic AI capabilities.

For organizations serving thousands or millions of users, data quality issues rarely announce themselves clearly. Instead, they manifest as user complaints, incomplete analytics, failed processes, or degraded application performance—symptoms that point to an underlying data health problem without revealing the root cause.

Consider an enterprise application serving 50,000+ employees monthly. When users begin reporting incomplete data or missing functionality, the traditional response is reactive troubleshooting: IT teams investigate individual tickets, developers hunt for bugs, and frustrated business users work around the gaps. This approach is time-consuming, inconsistent, and fundamentally unsustainable as data volumes and system complexity grow.

The challenge intensifies when multiple data pipelines feed applications from various sources, each with its own refresh schedule, validation rules, and failure modes. Without systematic monitoring, teams remain blind to patterns: the same pipeline might fail repeatedly for specific business units; certain data quality tests might consistently fail on particular days of the week; or resolution times might vary dramatically based on the type of issue.

This reactive posture isn’t just inefficient. It’s incompatible with agentic AI.

Data quality is not the only necessary component of a successful agentic AI deployment. But if an organization cannot reliably detect, diagnose, and resolve data quality issues through human oversight, delegating those decisions to autonomous agents is not just premature but potentially harmful.

The path forward requires shifting from reactive firefighting to proactive monitoring. This means building systems that continuously test data health, identify failures as they occur, and surface patterns that enable faster resolution.

As described in our previous article about data quality, a mature framework includes:

Comprehensive Test Coverage

Structured Failure Logging

Intelligent Analysis and Visualization
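To make the first two elements more concrete, here is a minimal Python sketch of a data quality test whose failures are captured as structured records rather than free-text messages. It is an illustration only, not the implementation described in this article: the names `FailureRecord`, `check_not_null`, and `run_checks`, and the fields on each record, are hypothetical choices made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Optional

@dataclass
class FailureRecord:
    """One structured log entry per failed test (fields are illustrative)."""
    test_name: str
    pipeline: str
    business_unit: str
    detected_at: str  # ISO 8601 timestamp
    detail: str

def check_not_null(rows: list, column: str) -> Optional[str]:
    """Return a failure description, or None when every row has a value."""
    missing = sum(1 for row in rows if row.get(column) in (None, ""))
    return f"{missing} rows missing '{column}'" if missing else None

def run_checks(
    rows: list,
    pipeline: str,
    business_unit: str,
    checks: dict,
    now: Optional[datetime] = None,
) -> list:
    """Run every named check and collect one FailureRecord per failure."""
    now = now or datetime.now(timezone.utc)
    failures = []
    for name, check in checks.items():
        detail = check(rows)
        if detail is not None:
            failures.append(
                FailureRecord(name, pipeline, business_unit, now.isoformat(), detail)
            )
    return failures

# Hypothetical sample data: one row is missing its employee_id.
rows = [
    {"employee_id": 1, "course": "A"},
    {"employee_id": None, "course": "B"},
]
checks: dict = {
    "employee_id_not_null": lambda r: check_not_null(r, "employee_id"),
    "course_not_null": lambda r: check_not_null(r, "course"),
}
failures = run_checks(rows, "hr_feed", "Sales", checks)
```

Because each failure carries the same fields (which test, which pipeline, which business unit, when), the records can later be grouped and counted, which is what makes the analysis step possible.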

This approach transforms data quality from a cost center into a strategic capability. Teams shift from asking “What broke?” to “Where are our systemic weaknesses?” and from “How do we fix this instance?” to “How do we prevent entire categories of failures like this?”

RevGen implemented this approach for one of the nation’s largest telecommunications companies. Our client operated an employee development platform serving over 50,000 users monthly across the globe, but persistent data quality issues were degrading the user experience and consuming significant support resources.

The Challenge

Users reported incomplete or missing data with no obvious pattern. The development team knew that certain conditions triggered pipeline failures; however, without systematic tracking, they couldn’t identify which business units were most affected, which failure types were most common, or how quickly different issues were resolved.

The Implementation

RevGen partnered with the client to design and deploy a comprehensive data quality monitoring solution:

The centerpiece of the solution was an interactive Power BI dashboard that transformed raw failure logs into actionable intelligence. Rather than a simple report from a single data table, the dashboard synthesized multiple data sources and performed complex, conditional datetime calculations to surface patterns invisible in the raw data.
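The client's dashboard was built in Power BI, and its actual calculations are not reproduced here. As a rough illustration of what a conditional datetime calculation over failure logs can look like, the Python sketch below computes how long each failure was open, using the resolution time when one exists and a reporting cutoff otherwise, then summarizes by business unit and by weekday. All names (`hours_open`, `summarize`, the log fields) and the sample data are assumptions made for this example.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean
from typing import Optional

# Fixed list so weekday names do not depend on the system locale.
WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]

def hours_open(detected_at: datetime, resolved_at: Optional[datetime],
               as_of: datetime) -> float:
    """Hours a failure was open; unresolved failures are measured up to as_of."""
    end = resolved_at if resolved_at is not None else as_of
    return (end - detected_at).total_seconds() / 3600

def summarize(failure_log: list, as_of: datetime) -> tuple:
    """Return (average hours open per business unit, failure count per weekday)."""
    hours_by_unit = defaultdict(list)
    count_by_weekday = defaultdict(int)
    for entry in failure_log:
        hours_by_unit[entry["business_unit"]].append(
            hours_open(entry["detected_at"], entry["resolved_at"], as_of)
        )
        count_by_weekday[WEEKDAYS[entry["detected_at"].weekday()]] += 1
    avg_hours = {unit: mean(hours) for unit, hours in hours_by_unit.items()}
    return avg_hours, dict(count_by_weekday)

# Hypothetical failure log: two resolved failures and one still open.
failure_log = [
    {"business_unit": "Sales", "detected_at": datetime(2025, 1, 6, 8, 0),
     "resolved_at": datetime(2025, 1, 6, 12, 0)},
    {"business_unit": "Sales", "detected_at": datetime(2025, 1, 13, 8, 0),
     "resolved_at": None},
    {"business_unit": "HR", "detected_at": datetime(2025, 1, 7, 9, 0),
     "resolved_at": datetime(2025, 1, 7, 10, 30)},
]
avg_hours, by_weekday = summarize(failure_log, as_of=datetime(2025, 1, 13, 10, 0))
```

The conditional branch matters because open issues have no resolution timestamp; without it they would either be dropped from the averages or break the calculation.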

The Results

The dashboard enabled the team to:

The impact was immediate and quantifiable. User support tickets decreased as issues were caught and resolved. The development team reclaimed time previously spent on reactive troubleshooting, redirecting those resources toward platform enhancements. Client leadership praised the solution for bringing transparency and accountability to what had been an opaque problem.

This real-world example demonstrates a critical truth about the path to agentic AI: automation requires foundation-building, not just algorithmic sophistication.

Our data quality monitoring system represents a crucial intermediate step on the journey to autonomous operations. Today, human analysts review the dashboard, identify patterns, and decide which teams to contact. Yet the system is already structured to support agentic capabilities:

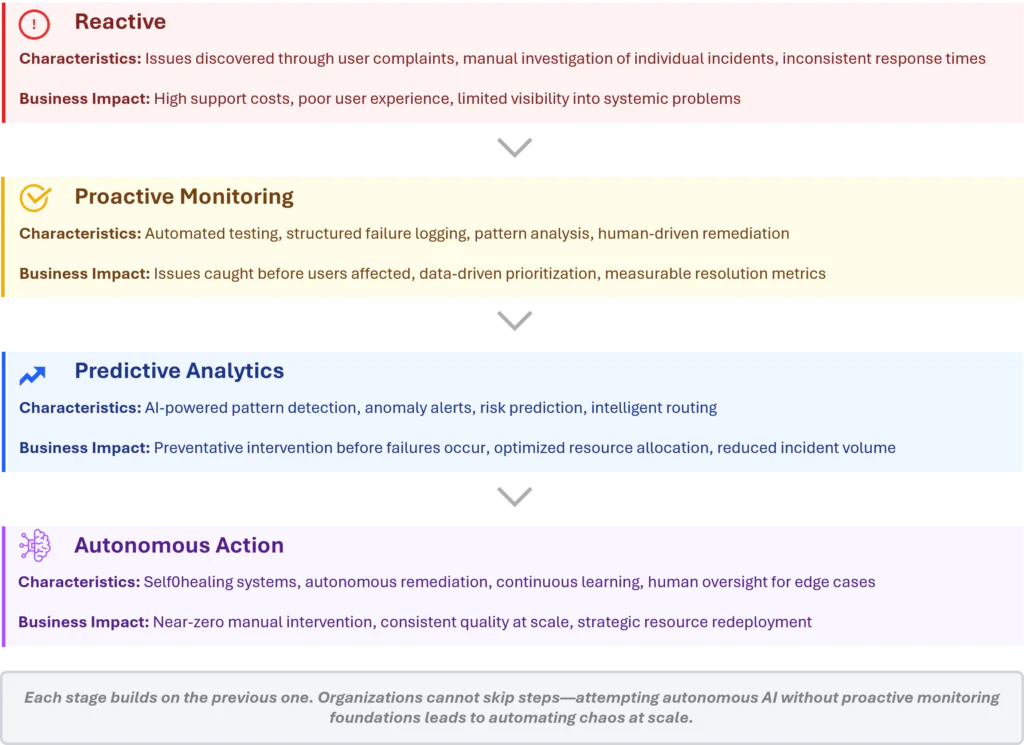

This progression from reactive → proactive monitoring → predictive analytics → autonomous action represents the realistic path to agentic AI in enterprise operations. Each step builds capabilities and confidence while maintaining human oversight where it matters most.

The Journey from Reactive to Autonomous, Visualized

Organizations that skip these intermediate steps by attempting to deploy agentic systems without first establishing data quality foundations risk automating chaos at scale.

Autonomous agents are only as good as the data they consume and the processes they automate.

If your data pipelines fail unpredictably and your organization lacks systematic ways to detect and resolve those failures, an AI agent will simply make poor decisions, faster.

As enterprises accelerate their AI investments, the temptation is to focus on the exciting frontier capabilities such as autonomous decision-making. However, the organizations that will truly succeed with agentic AI are those investing in the less glamorous foundation work: data quality monitoring, structured logging, pattern analysis, and incremental automation.

This isn’t just a technical best practice. It’s a winning business strategy, too. Every dollar spent on data quality monitoring today drives reduced support costs, faster issue resolution, and improved user experience, while laying essential groundwork for agentic capabilities tomorrow.

The question isn’t whether to invest in data quality, but whether you can afford to deploy AI without it.

RevGen helps our clients establish robust data quality frameworks that deliver immediate value while enabling future agentic AI capabilities. Whether you’re struggling with data pipeline reliability, preparing for AI adoption, or looking to automate existing monitoring processes, our team brings deep expertise in data quality, analytics, and AI enablement. Contact us to discuss how we can help transform your data operations.
